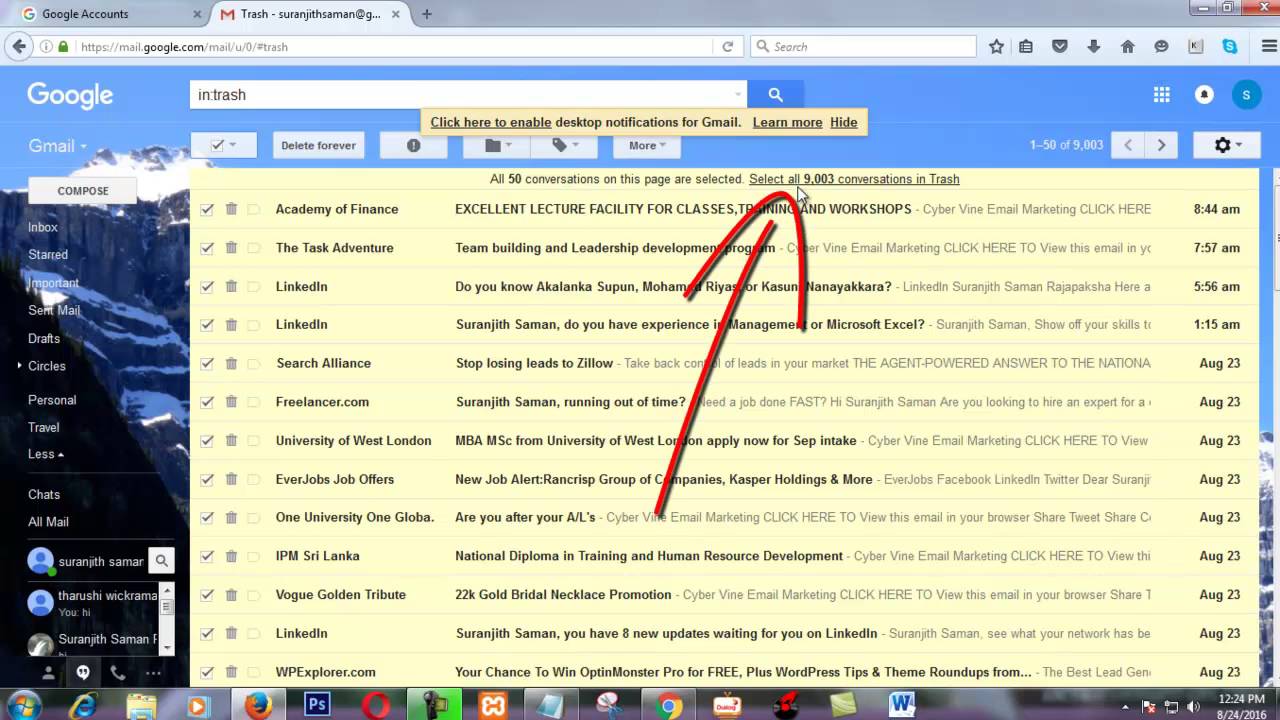

TL;DR: Windows 10 File History will not back up between exFAT and NTFS drives.

I have long held that for (modern) Windows use you should always use NTFS, and I have always stuck to that. This week I built a RAID as mass storage, with every intention of setting up a backup. I don't do anything fancy for backup and stick to Windows File History on an external drive through Windows 10. Though I have avoided it for years, I have seen *many* on here who espouse the benefits and importance of using exFAT on anything aside from the boot drive: that exFAT is more compatible with other hardware and thus a good option, etc. I have seen many give the general advice that any added storage should be exFAT. And so I went with exFAT for the array.

Windows File History will not allow an exFAT-partitioned drive to be backed up to an NTFS drive. It gives error code 0x80070032, which, when googled together with exFAT and NTFS, turns out to be a minor but known issue. Now I am slowly pulling 4TB off the RAID-0 and reformatting, because no way am I running a zero array without active backups. I will format it to NTFS and (hopefully) be golden.

Data Deduplication is part of the File and Storage Services role in Windows Server. The process to install the Windows Server Dedup feature is straightforward: administrators can install Dedup using the Server Manager GUI, Windows Admin Center, or PowerShell. The screenshot is from Windows Server 2019.

Specific use cases lend themselves favorably to data deduplication. Which workloads typically show massive benefits from using data deduplication? Let's list them in order, starting with the most significant benefits:

- 80–95% space savings: Virtualization environments, especially VDI workloads and ISOs for deployment.
- 70–80% space savings: Deployment shares contain massively duplicated data stores of software binaries, cab files, and other operation-specific files.
- 50–60% space savings: General file shares can contain monolithic repositories of files that can include a tremendous amount of duplicated data.
- 30–50% space savings: User documents can contain standard user files that may include photos, music, and videos.

To deduplicate data, Windows Server uses the following process:

1. The file system scans storage to find files matching the deduplication optimization policy.
2. The system breaks the files into chunks.
3. Unique chunks of file data are identified.
4. These file chunks are placed in the chunk store.
5. Pointers to the chunk store are created to allow redirecting file reads to the appropriate file chunks.

End users are unaware they may be working with deduplicated data.
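To make the chunk-store idea above concrete, here is a minimal Python sketch of the same steps: pick files that match a policy, split them into chunks, keep only unique chunks, and rebuild files from pointers. It is an illustration under stated assumptions, not how Windows Server implements Dedup. The `ChunkStore` class, the `min_size` policy, the tiny fixed chunk size, and the use of SHA-256 digests as pointers are all hypothetical choices made for the example.

```python
import hashlib


class ChunkStore:
    """Toy chunk store (hypothetical; not the Windows Server implementation)."""

    def __init__(self, chunk_size=4, min_size=8):
        self.chunk_size = chunk_size  # assumed fixed-size chunks, for simplicity
        self.min_size = min_size      # assumed policy: skip files smaller than this
        self.chunks = {}              # digest -> chunk bytes (unique chunks only)
        self.files = {}               # file name -> ordered list of digests (pointers)

    def matches_policy(self, data):
        # Step 1: decide whether a file qualifies for optimization.
        return len(data) >= self.min_size

    def optimize(self, name, data):
        """Deduplicate one file; returns False if the policy excludes it."""
        if not self.matches_policy(data):
            return False
        pointers = []
        # Step 2: break the file into chunks.
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            # Step 3: identify unique chunks by their content hash.
            digest = hashlib.sha256(chunk).hexdigest()
            # Step 4: store the chunk only if it has not been seen before.
            self.chunks.setdefault(digest, chunk)
            # Step 5: record a pointer into the chunk store.
            pointers.append(digest)
        self.files[name] = pointers
        return True

    def read(self, name):
        # Reads are redirected through the pointers, so callers get the
        # original bytes back without knowing the file was deduplicated.
        return b"".join(self.chunks[d] for d in self.files[name])

    def stored_bytes(self):
        return sum(len(c) for c in self.chunks.values())


if __name__ == "__main__":
    store = ChunkStore()
    image_a = b"AAAABBBBCCCC"
    image_b = b"AAAABBBBDDDD"  # shares two of three chunks with image_a
    store.optimize("image_a", image_a)
    store.optimize("image_b", image_b)
    print("logical bytes:", len(image_a) + len(image_b))
    print("stored bytes: ", store.stored_bytes())
    print("round trip ok:", store.read("image_b") == image_b)
```

The two sample files share most of their content, so the store keeps fewer bytes than the files occupy logically, while `read` still returns each file unchanged. That is the same effect, at toy scale, as the savings listed for heavily duplicated workloads.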
